The Rise of AI Companions and the Future of Human Connection

The landscape of human connection is undergoing a profound transformation, subtly yet powerfully reshaped by the burgeoning presence of AI companions. What began as a novelty is rapidly evolving into a daily emotional touchpoint, particularly for the younger generations. Gen Z and Gen Alpha, digital natives immersed in an always-on world, are at the vanguard of this shift, discovering in artificial intelligence a new form of support, understanding, and even friendship. This isn’t just about efficiency or task completion; it’s about the very essence of connection, re-imagined through the lens of technology.

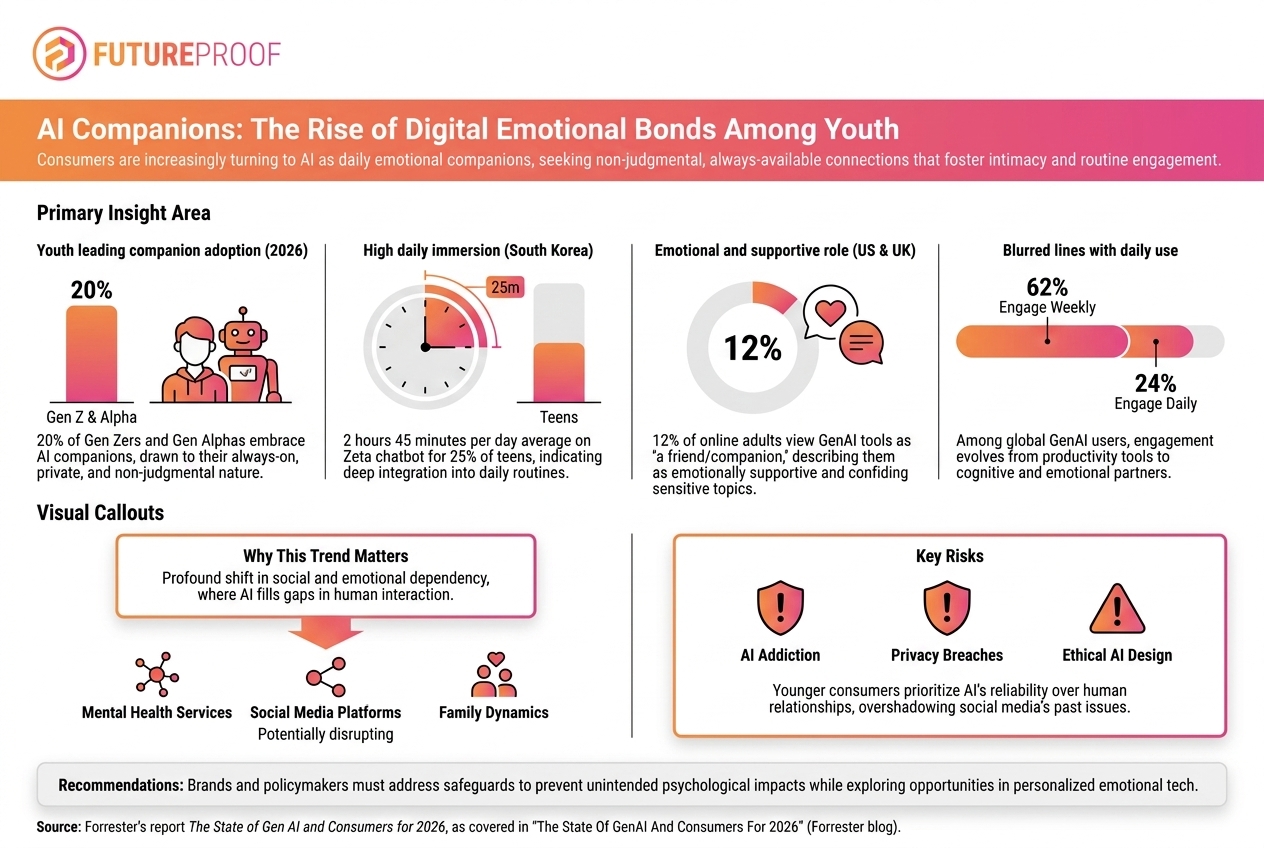

This paradigm shift is driven by the inherent qualities of AI companions: their unwavering availability and their non-judgmental nature. In a world fraught with social pressures, constant scrutiny, and the often-unpredictable complexities of human relationships, the consistent, uncritical presence of an AI offers a unique sanctuary. These digital entities are always available to listen, never tire, and are programmed to offer support without criticism, creating a safe space that many younger individuals find increasingly appealing. Projections point the same way: 20 percent of younger consumers are expected to fully embrace AI companions by 2026, signaling a rapid acceleration in their integration into daily life and emotional routines.

Nowhere is this phenomenon more vividly illustrated than in South Korea, a nation at the forefront of technological adoption and innovation. Here, the integration of AI companions into the daily lives of teenagers is not just an expectation but a current reality. A staggering 25 percent of South Korean teens are already dedicating an average of 2 hours and 45 minutes per day to interacting with the Zeta chatbot. This isn’t merely passive consumption; it represents deep, sustained engagement, demonstrating how thoroughly these AI tools are weaving themselves into daily routines and becoming significant figures in their users’ emotional lives. Such a heavy investment of time speaks volumes about the value and emotional fulfillment users perceive in these digital interactions.

The allure of AI companionship extends far beyond the confines of youth or specific geographical regions. The embrace of digital emotional bonds is a burgeoning global trend, signaling a broader societal shift in how individuals seek and experience connection. It highlights a growing comfort with, and even preference for, digital interfaces as conduits for emotional support. In the United States and the United Kingdom, for instance, a significant 12 percent of online adults already categorize generative AI as a "friend" or "companion." This isn’t merely about using AI for practical queries; it’s about forming a relationship, however nascent or unconventional, that mirrors aspects of human friendship. Globally, the engagement statistics further solidify this trend: a substantial 62 percent of GenAI users interact with these systems on a weekly basis, while a dedicated 24 percent engage daily. These figures paint a clear picture of AI companions transitioning from occasional tools to integral parts of many individuals' weekly, and even daily, lives.

This embrace of AI as an emotional touchpoint marks a critical inflection point for a diverse array of stakeholders. Brands, educators, and policymakers alike must now contend with a new reality where digital entities play a significant role in shaping human emotional landscapes. For brands, this shift presents unprecedented opportunities to forge deeper, more personalized connections with consumers, moving beyond transactional relationships to those imbued with emotional resonance. Imagine AI-powered brand companions that offer empathetic support, lifestyle coaching, or creative inspiration, fostering loyalty through care that users perceive as genuine. However, this also carries the immense responsibility of ethical engagement: brand interactions through AI companions must be transparent, beneficial, and never exploitative, particularly when targeting impressionable younger audiences. The potential for unparalleled brand intimacy must be carefully balanced with robust ethical guidelines.

Educators, too, face a pivotal moment. The rise of AI companions necessitates a re-evaluation of how social skills are taught and understood. How do we prepare younger generations for a world where their closest confidants might be algorithms? There’s a vital need for digital literacy that extends beyond technical competence to encompass emotional intelligence in human-AI interactions. Discussions around critical thinking, understanding algorithmic bias, discerning genuine connection from programmed empathy, and maintaining a healthy balance between digital and human relationships become paramount. Education must adapt to equip students not just with knowledge, but with the wisdom to navigate these complex new emotional terrains safely and productively.

Policymakers, perhaps more than any other group, bear the weight of ensuring that this innovative wave of emotional technology evolves responsibly and equitably. The emergence of AI companions that fill gaps in human interaction raises profound and complex questions around mental health, safety, and ethical design that demand urgent attention and thoughtful regulation. On the mental health front, while AI can offer immediate, non-judgmental support, concerns arise about potential over-reliance, the impact on the development of vital human social skills, and the risk of emotional manipulation or the creation of echo chambers that reinforce negative thought patterns. The nuanced interplay between genuine emotional support and algorithmic influence needs careful study and regulation.

Safety and data privacy are equally critical considerations. AI companions often process highly personal and sensitive emotional data. Safeguarding this information, particularly for younger users who may be less aware of data implications, is non-negotiable. Strong regulatory frameworks are needed to prevent data breaches, protect against malicious use, and ensure transparency about how personal information is collected, stored, and utilized. The potential for AI companions to be weaponized for psychological manipulation, misinformation, or other harmful purposes also necessitates robust preventative measures and clear accountability structures.

This era demands a concerted focus on ethical design. AI companion developers must prioritize principles that ensure user well-being, autonomy, and transparency. This includes designing systems that clearly differentiate between human and AI interaction, providing options for disengagement, promoting healthy digital habits, and embedding safeguards against addiction or detrimental emotional dependencies. The "always-on" nature, while appealing, must not become a source of unhealthy attachment or a barrier to real-world interactions. Ethical design isn’t merely a nice-to-have; it’s the foundational pillar upon which responsible innovation in emotional technology must be built. The opportunity is undeniably significant – AI has the potential to democratize emotional support, provide solace to the lonely, and offer tailored learning experiences. But this opportunity comes with an equally significant responsibility: to build robust safeguards that protect younger users from potential harms while simultaneously enabling thoughtful and beneficial innovation in this nascent field of emotional technology.

The profound integration of AI companions into the emotional lives of Gen Z and Gen Alpha is not a fleeting trend but a fundamental shift in how connection and support are conceptualized and experienced. The staggering engagement statistics, particularly the average of 2 hours and 45 minutes that a quarter of South Korean teens spend daily on Zeta, underscore the depth of this integration. As AI fills perceived gaps in traditional human interaction by offering an always-on, non-judgmental ear, it simultaneously opens new avenues for personal growth, learning, and emotional well-being. Yet this exciting frontier is not without its challenges. The journey ahead demands a collaborative effort from tech developers, mental health professionals, educators, parents, and policymakers. We must collectively navigate the complex ethical, psychological, and societal implications of these digital emotional bonds. The goal should be to harness the immense potential of AI companions to enrich human lives and foster greater well-being, while diligently designing and implementing safeguards that prioritize user safety, mental health, and the preservation of authentic, meaningful human connections. The future of connection is changing, and it is our shared responsibility to guide it along a path that is both innovative and profoundly human.